Back in December, a press release[1] was broadcast to the media about a peer reviewed study by Joseph J. Mangano and Janette D. Sherman (M&S). The study claimed that at least 14,000 deaths in the U.S. during the 14 weeks following the Fukushima disaster were tied to fallout from the reactors, with a special focus on children under the age of one.[2] At the time, I ignored the study for several reasons.

- The peer reviewed journal in question is not that notable: it ranks 2883rd for impact among all scientific journals, and 29th within its own category of Health Care Sciences and Services.[3] (That doesn’t mean it’s a bad journal, just that other researchers don’t cite it much.)

- The fact that the study results were broadcast through a press release is “odd” to say the least.

- Not many media outlets carried the story. The Sacramento Bee did, but not many others. (The story is now curiously missing from the Bee’s website.)

- No one wrote into the Foundation asking about it.

- And radioactive iodine and cesium just don’t kill that quickly unless the doses are massive. It made no sense.

But recently, things have changed a bit. Several significant alternative health websites have picked up on the study and are trumpeting its “peer reviewed” credentials. What this means, of course, is that questions are starting to stream into the Foundation — questions that now must be answered.

However, before I address the study itself, I need to mention something. I have spent six of the last seven newsletters trashing peer reviewed studies from the medical community that disparaged nutrients and concepts strongly supported in the alternative health community: vitamins E and D, niacin, and the idea that weight gain really results from what you eat, for example. It would be, at the very least, hypocritical to suddenly extol the virtues of a peer reviewed study simply because its conclusions are in line with beliefs held by many of my associates, without putting it under the same scrutiny that I apply to mainstream studies. And as it turns out, when you actually put the study in question under a magnifying glass, it doesn’t stand up.

That said, let’s look at the study and explore some of the problem areas in it.

Examining the Fukushima “death” study

As Michael Moyer points out in his Scientific American blog,[4] the study provides neither evidence nor citation for one of its fundamental assertions: that significant levels of Fukushima fallout arrived in the United States just six days after the reactor meltdown. Moyer also points out that even the study’s authors acknowledge that the U.S. Environmental Protection Agency’s monitoring of radioactivity in milk, water, and air in the weeks and months following the disaster found relatively few samples that demonstrated any measurable concentrations of radioactivity. But undeterred by this lack of supporting evidence, the authors pull an unsupported conclusion out of their hats and state that “clearly, the 2011 EPA reports cannot be used with confidence for any comprehensive assessment of temporal trends and spatial patterns of U.S. environmental radiation levels originating in Japan.” Or as Moyer sums up their conclusion, “In other words, the EPA didn’t find evidence for the plume that our entire argument depends on, so ‘clearly’ we can’t trust the agency’s data.”

Moyer goes on to argue that the study relies on “sloppy statistics,” using data from 122 cities, 25 to 35 percent of the national total, to project data for the entire country. He further indicates that others have accused the authors of cherry picking the data. But this all misses the key point: the data itself is bogus, cherry picked or not. And here SpunkyMonkey in the Physics Forums (a favorite haunt of nuclear engineers) did a great job of exposing this major flaw in the study.[5] And I quote:

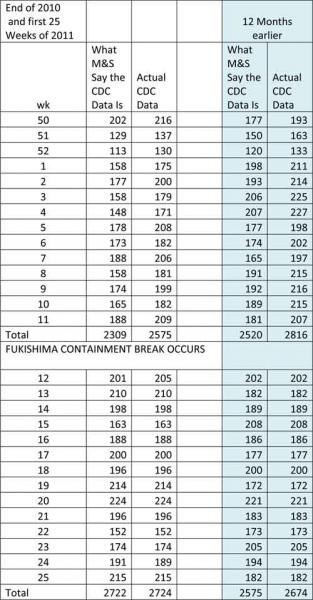

“Immediately seeing major problems with that study by Mangano & Sherman (M&S), I asked a statistician what he thought of it. He crunched the data and while he found several devastating statistical problems, his most remarkable finding was that the U.S. infant-death data M&S report as being from the CDC does not jibe with the actual CDC infant-death data for the same weeks.

“The M&S infant-death data allegedly from the CDC can be seen here (go to Table 3, page 55). And the actual CDC infant-death data can be seen here (go to Locations, scroll down and select Total and press Submit for the data; the data for infants is in the Age column entitled “Less than 1”). The mismatching data sets are included at the end of this post, and with the links I’ve provided here, everything I’m saying can be independently confirmed by the reader.

“Here are the mismatching data sets, note that post-Fuku weeks 15 through 24 do match:

(Note: this is not the actual chart on SpunkyMonkey’s post. His didn’t adjust for the exclusion of Ft. Worth, New Orleans, and Phoenix. M&S state in their report that they pulled those cities from the data since they did not consistently report data for the required timeframes. In truth, it didn’t change things very much. Nevertheless, I did that so we’d have an apples-to-apples comparison. I also included a comparison of the data for the previous 12 months — as that also plays a key role in “proving” M&S’s premise, and the discrepancies are even more egregious.)

“The nature of the mismatch is that all the pre-Fukushima M&S data points are lower than the actual CDC data points and bias the data set to a statistically significant increase in post-Fukushima infant deaths. But in the actual CDC data, there is no statistically significant increase. The statistician also found that even M&S’s data for all-age deaths was in fact not statistically significant, contrary to the claim of M&S.”

(Note how the M&S data and the adjusted CDC data actually tend to match number-for-number post-Fukushima, which pretty much confirms that we’re all working from the same CDC database. Also you will note, the identical sort of discrepancies hold true for the data presented for the previous 12 months — again biasing the data to a statistically significant, but unsupported, increase in deaths from one year to the next.)

“Why the infant data are mismatched is not understood at this time. However, a review of the archived copies of the Morbidity and Mortality Weekly Report archive finds that the historically released data points for the weeks in question jibe with the CDC’s MMWR database. So I see no reason to believe the CDC’s online data are not the true data.”
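For readers who want to run this kind of check themselves, here is a minimal sketch of the comparison described above: line up the reported weekly counts against the source counts, list the weeks that differ, and see how the apparent before-and-after change shifts. The function names are my own, and the numbers are made-up placeholders chosen only to show the pattern. They are not the CDC or M&S figures.

```python
from statistics import mean


def mismatched_weeks(reported, source):
    """Return (week_index, reported - source) for each week where the series differ."""
    if len(reported) != len(source):
        raise ValueError("both series must cover the same weeks")
    return [(i, r - s) for i, (r, s) in enumerate(zip(reported, source)) if r != s]


def percent_change(before, after):
    """Percent change in mean weekly deaths from the 'before' weeks to the 'after' weeks."""
    return 100.0 * (mean(after) - mean(before)) / mean(before)


# Placeholder numbers for illustration only; NOT the CDC or M&S figures.
source_before = [100, 104, 98, 102]
source_after = [101, 99, 103, 100]
reported_before = [90, 95, 88, 93]    # understated relative to the source
reported_after = list(source_after)   # matches the source exactly

diffs = mismatched_weeks(reported_before + reported_after,
                         source_before + source_after)
source_change = percent_change(source_before, source_after)
reported_change = percent_change(reported_before, reported_after)

print("weeks that differ (index, reported - source):", diffs)
print("change using source data:   %.1f%%" % source_change)
print("change using reported data: %.1f%%" % reported_change)
```

If only the “before” weeks are understated, every mismatch comes out negative and confined to the early weeks, and the reported series shows a jump that the source series does not. A real check would also need a proper significance test, which this sketch leaves out.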

. . .

The bottom line is that it looks as though the alternative health writers who jumped onboard the Mangano & Sherman Report and pitched its shocking conclusions to their followers were tricked into buying the proverbial pig in a poke. That’s not to say that the study’s conclusions are necessarily false, only that this study doesn’t prove them.

On the other hand, my gut feeling is that the study’s conclusions are not true. There is no compelling evidence that any significant radioactivity made it to the United States. There is no evidence that any noticeable numbers of adults or children in the United States died from anything that could conceivably be connected to exposure to radioactive iodine and cesium. We’re talking about a sudden surge in thyroid cancer (radioactive iodine) and radiation poisoning (cesium). There’s just no evidence that happened. And if there were exposure, it is far, far more likely that any significant increase in deaths would not be seen until several years down the road. Even people who lived near the reactors and received much higher doses of radiation exposure than could possibly have occurred in the U.S. have not started dying yet — certainly not in any noticeable numbers, let alone by the thousands.

Also, even if the numbers used in the study hadn’t been so squirrely, basing conclusions on non-specific increases in deaths is a highly questionable methodology. You’re right back to a variation of the problem encountered in the infamous flu vaccine cohort studies. Or to put it another way, if you accept the study’s premise, you also have to buy into the idea that radiation exposure from the Fukushima plant has caused an increase in deaths in the United States from things such as:

- Heart disease.

- Lightning strikes.

- Food poisoning.

- Flu.

- Complications from taking prescription drugs.

- Etc.

It boggles the mind.

Conclusion

I know many people live and die on the contents of peer-reviewed studies, but I’m not so sure that’s such a good idea. Until a study has actually been replicated several times, all “peer reviewed” means is that contemporaries have read the study and concluded that it “looks” good and “seems” to follow proper scientific procedures. The reviewers don’t actually verify the accuracy of the data or replicate the experiments. They will never dig into the data behind the study to see if it is accurately presented. They just assume it is. They’re not evaluating data; they’re evaluating methodology and presentation. Again, if there is a problem with the data itself, that only comes to light if someone tries to replicate the study, or if someone (in this case, SpunkyMonkey and his statistician friend) decides to spend the time to peek behind the curtain. In addition, as I’ve pointed out many times before, there are numerous places for bias to slip into a study and totally distort its conclusions.

Does that mean that peer-reviewed studies are useless? Not at all! But they (both those that are pro medicine and those that are pro alternative health) need to be taken as what they are:

- Conducted by human beings with all of their faults.

- Subject to bias.

- Sometimes subject to cheating.

- Based on the best available information we have at this time.

- Often politically driven.

- Often agenda driven.

- Often dollar driven — as when they are sponsored by pharmaceutical companies.

- And often based on previous studies that were flawed. In legal terms we would call this new study “the fruit of the poisonous tree.” That is to say: any conclusions in the new study drawn from a previous flawed study are, by definition, flawed themselves.

References

| ↑1 | Press Release “Medical Journal Article: 14,000 U.S. Deaths Tied to Fukushima Reactor Disaster Fallout.” PR Newswire. 19 Dec 2011. <http://www.prnewswire.com/news-releases/medical-journal-article–14000-us-deaths-tied-to-fukushima-reactor-disaster-fallout-135859288.html> |
|---|---|
| ↑2 | Joseph J. Mangano and Janette D. Sherman. “An Unexpected Mortality Increase in the United States Follows Arrival of the Radioactive Plume from Fukushima: Is There a Correlation?” December 2011. International Journal of Health Services. (Accessed 8 Jan 2012.) <http://www.radiation.org/reading/pubs/HS42_1F.pdf> |
| ↑3 | Journal-Ranking.com. (Accessed 8 Jan 2012.) <http://www.journal-ranking.com/ranking/listCommonRanking.html?selfCitationWeight=1&externalCitationWeight=1&citingStartYear=1901&journalListId=336> |
| ↑4 | Michael Moyer. “Researchers Trumpet Another Flawed Fukushima Death Study.” 20 Dec 2011. Scientific American. (Accessed 8 Jan 2012.) <Researchers Trumpet Another Flawed Fukushima Death Study> |
| ↑5 | SpunkyMonkey. 24 Dec 2011. Physics Forums. (Accessed 8 Jan 2012.) <http://www.physicsforums.com/showthread.php?t=562587> |

Thank You Jon! What a relief! Although the tenseness from the fears of radioactive fallout have apparently fizzled out by now, at least there is a momentary sigh in reading this report that does not add to the fears! In any case the spotlight on the true nature of peer-reviewed articles is very noteworthy! Best! Chef Jem

Dear Jon and readers:

There IS mounting evidence that increasing amounts of radiation from Fukushima CONTINUES to come into the United States. I can state emphatically that as an atmospheric scientist, I am monitoring the amount of radiation in dispersion flow. Since my specialty is in electromagnetics, the radiation amounts are intense enough to shift the climate patterns. Permit me to explain: moisture coalescence is altered by a shift in atmospheric electromagnetics, and radiative streams can metamorphosize / interfere that equation. The radiation essentially can minimize the grouping of water droplets at the normalized rate without the radiation. Many in mainstream will say this is not true, but I am requesting that we all empower ourselves to learn the truth(s) that continue to show their ugly heads. Because of your influence, it would have been better for you to show how the Fukushima radiation HAS been shown in USA-based milk and other commodities, rather than just concentrate on one study. That is, you could have had a few paragraphs that said something like, “Regardless of this study, there is other mounting evidence in x, y and z areas…” But you did not, and it further leads others to think that you are now siding with the lies, misconstrued news reporting and statistical manipulation that Mainstream and governments are brainwashing the public with day in day out. Thank you for listening. Regards,

Dr. Simon R. R. Atkins, Ph.D., D.Sc. (A.M.)

Thank you, Dr. Atkins, for discussing this crucial point. Although the study that Jon Barron discusses has little, if any, validity, the bottom line is that the governments and the press (those who are secretly controlling the world) don’t want us to know that, for all intents and purposes, the Gulf of Mexico is dead, and the radiation spill from Fukushima is the most serious disaster to threaten our health that I’ve seen in my 68 years.

Dear Ones –

Agreeing there is importance in Jon’s taking time for this assessment, I feel wisely alerted. Yet, Dr. Simon Atkins – I feel we should hear more from you. Please include me on your mailing list if this is possible, sir. It is notable to me that in those early weeks after the tsunami in Japan, I was able to find online a 3 layered air and ground global radiation flows page showing density and by 3 or 4 types of radioactive particles. I was not able to find it after a few weeks. The site claimed to be from Japanese scientists about projected global activity patterns by air currents and what they knew was happening there – was fascinating to watch as it moved through the week.

It showed US west coast receiving quite a bit. US/California monitoring were notably flakey at best in frequency and location.

One of my (very healthy) students conceived right after the accident, and was ingesting abundant “organic” milk and dark leafy greens, generally 3 times daily. She aborted a few weeks later, and had the remains tested to show there was never any viability and it had distortions. Her respect for my teaching in the training I offered in California on how to adjust radioactive protective foods for pre/postnatal needs gained respect after that. She had felt I was wasting her time at first. It is just one story, and both of us had felt empowered by the report you debunk, Jon. Yet I am still much convinced we live in an ongoing pollution of many forms of radiation, including Fukushima, and have proactive homework to do for selves and clients.

Jon does not dismiss the idea that radiation is still drifting in from Fukushima. What he does dismiss is fear mongering. Claiming that 14,000 people in the US died in the weeks immediately following containment breach based on “massaged” data does not help the discussion. Jon concentrated on this study because this study is being promoted on many websites as gospel, including some health sites that are among the most visited on the web, with hundreds of thousands of followers.

http://www.youtube.com/watch?v=XT6ZCxzW8K4 (nuclear)

Jon,

After reading this article, I would like you to watch this short video about the nuclear issue in the US resulting from Fukushima nuclear reactor disaster.

thanks, I would like to know what you think..

Leah

John,

As always, you put out outstanding work.

Thanks!

Touché

You go Jon! I don’t have much to say except I appreciate your effort to be a voice for reality here.

what is the worth of stressing over one naturally started affect on so many greedy people pursuing the American Dream and using selfish, greedy and unsustainable power sources such as Nuclear.

US shoulda done more to stop Nuclear proliferation if they were “downwind”

Balanced views are appreciated. However, as indicated by Herr Atkins we aren’t out of the woods – and may never be. If I read correctly, he seems to be saying rads in the atmosphere inhibits precipitation due to their effects on water vapor (clustering). From what I’ve seen, chemtrails may do same. Perhaps Mr. Atkins can follow up here.

Thank you for a sensible article. The ‘scientist’ sure leapt to the defense of the ‘science’, didn’t he? Scientists who depend on grant money will support one another. Your readers are smart enough to spot this ‘peer review’ flag.

IMHO, we have more to be concerned with from local nuclear facilities and other sources of toxic material. Our nuke plants are old and leaky and we now have the problem of fracking effects on our ground water.

A big dose of compassion for the Japanese who are dealing with their disaster is in order.

Di

So Jon, I side with what Dr. Simon says. WHO is actually recording any fallout, if they are NOT, then what they say has NO validity. Surely there must be SOME US universities (I do not consider the US Government to be trustworthy) which have radiation monitoring equipment, that IS being used to detect any fallout from Fukushima, so what are their results?

To check on independent volunteer radiation detection, search the site “radiation network dot com”. No abnormal rads were noted anywhere outside Japan. I also contacted a local family-owned dairy that had its products analysed by an independent lab, with no abnormal results. I live in the N. Calif bay area.

Hi Jon.

I totally agree and understand the situation with peer reviewed articles and studies in the medical field.

It is a known fact that Dr. Robert Gallo, the man who claimed the discovery of the Human Immunodeficiency Virus (but actually, if anything, stole it from the real discoverer, Luc Montagnier) made that claim with the announcement to the world by then US Secretary of Health and Human Services, Margaret Heckler. He did this without any peer review of his research until much later. An unethical decision that set the wheels in motion to claim the cause of AIDS, which is still controversial to this day.

By the way, Gallo filed a patent for the ‘hiv tests’ that very day and has made millions of dollars in the process.

Just saying. I understand why you would not wish to address the controversy surrounding the HIV=AIDS hypothesis as anyone who does so is ostracized, even black listed by the medical and pharmaceutical community. In many ways, it can be the end of one’s career. That hypothesis is full of holes.

However, the truth is being told around the world and will one day, I believe, come to light for everyone to see what a scam the entire HIV/AIDS monster has become. Initially because of this one fact: The research done by Gallo was accepted as truth by the entire world before there was any peer review. And in fact, other scientists still say to this day that they have not been able to duplicate Gallo’s research, including Peter Duesburg, the scientist who discovered retroviruses and who worked with Gallo at one time.

Gallo himself once said that Duesburg knew more about the retrovirus than anyone alive, yet the medical and pharmaceutical communities have black listed him for his continued statements that HIV is not the cause of AIDS.

Thank you.

Glad to see someone like Jon mention something about this and keep facts separate from speculation. I also found this “news” when it first came out to be on the bizarre side of things. It’s interesting how it’s usually the nonexperts (with all due respect to the deemed experts) that end up calling out what’s wrong.

Unfortunately, it’s sensationalistic news articles like that study, that knock out the credibility on the subject of the r3dia^ion still spewing, which is maybe as twisted as it sounds, was the whole intention of it being put forth. They’re hoping it sounds so outrageous, that maybe the claim about the ongoing r#diation as a REAL problem is seen as coming from a bunch of nutters (which it isn’t).

No one doubts that the effects of F#k^ is going to be accumulating and showing itself slowly over the years, which is why we have to make a few changes for this “new” normal: taking a little clay every day, making sure we’re not iodine-deficient, doing detox baths and eating anti-r#d. foods/herbs. I’ll have to search if there are any posts regarding this, but an article on what to do, if not already on here somewhere, would be really great.

If radiation in the atmosphere does inhibit precipitation, would that have anything to do with our scant snowfall this winter? They are saying it’s the second year of la nina which tends to be milder than the first, but still… the difference from last winter is pretty substantial.

Dear Jon,

I have presented with Joseph Mangano on several radio shows, and I also presented this study on Coast to Coast AM radio this past January 12th. I have also written a well-referenced book on the issue, Fukushima Meltdown & Modern Radiation: Protecting Ourselves and Our Future Generations, with nearly 500 peer-reviewed references and nearly 200 authoritative citations for a total of 828 references in all. As you correctly note, not all peer-review should be considered sacrosanct when it comes to the natural health care community, but in proper context, it often can and does.

Your critique deserves a few quick responses that I hope will serve your audience in several ways. So, let’s go over this one by one. Your first bullet points state:

The peer reviewed journal in question is not that notable — ranking 2883 for its impact within the scientific community among all scientific journals, and 29th within its own category of Health Care Sciences and Services.3 (That doesn't mean it's a bad journal — just that other researchers don't cite it much.)

The fact that the study results were broadcast through a press release is "odd" to say the least.

Not many media outlets carried the story. The Sacramento Bee did, but not many others. (The story is now curiously missing from the Bee's website.)

No one wrote into the Foundation asking about it.

(continued..)

And radioactive iodine and cesium just don't kill that quickly unless the doses are massive. It made no sense.

First, it makes little difference what peer-reviewed journal an article appears in for several important reasons. First and foremost, the article was put through a complete, top to bottom review by experts to determine the scientific integrity of the article’s design, method, results and conclusions. If memory serves me, this journal was founded by a professor at Johns Hopkins and has been publishing since 1968. Conversely, imagine all the top rated journals such as JAMA and NEJM that knock nutraceuticals regularly with poorly designed studies. I can’t tell you how many times such publications distort the conclusion of studies to the favor of the drug industry. For example, how many times have the alarm bells been set off regarding the dangers or unproven effects of Vitamin C and E! These are debunked, but not often by follow-up articles in the peer-reviewed community. Why? Because of inherent bias, pure and simple.

Second, the M&S study was published according to the journal’s schedule. There is a non-profit research foundation (www.radiation.org) which underwrote the time and expense to compose the article and prepare it for submission. So, naturally the non-profit organization issued a press release regarding the peer-reviewed journal’s publication of the article once it had a publication date. Nothing strange here for a non-profit organization to do under such circumstances. (continued to part 3)

(Continued) Third, the media has been very interested in such studies, as I mention above, just not initially the so called “main stream.” How long did it take the main stream media to come around and expose the dangers of trans fats or corn syrup or even tobacco for that matter, and now regarding the alarming cancer escalations associated with cell phone use and smart meters? The EU gets it. So does WHO. But we rarely hear about these latter two in the main stream media right now do we? (See: http://doctorapsley.com/PillarIVResources.aspx). How much does the main stream depend upon advertisements from the producers of such products? Denial, normalcy and vested interests are the core reasons why main stream media is among the very LAST to get it with dangers.

Fourth, folks are now writing to your foundation about it, including one very knowledgeable PhD. I’d say, that’s a tribute to your foundation.

Fifth, radioactive iodine exposure is well known to immediately increase mortality rates in sub-populations who are immune immature or weak due to our constant exposure to opportunistic microbes. This finding is consistent with what occurred here in the U.S. after the Chernobyl catastrophe when slightly over 16,500 similar deaths resulted over the immediate 16 week period. The probability that these deaths were due to random chance was less than 1 in 1 billion! And to date, no other explanation has been brought forward to explain such increased mortalities. But all this should come as no surprise. Americans consume way less iodine than our counterparts in Japan. In fact, the Japanese on coastal areas and even inland are well known to consume 10 to 100 times more iodine daily than our recommended levels. This would tend to initially protect the Japanese population until it all catches up with them a bit further down the road. Iodine, which helps form and activate thyroid hormone, is essential for body heat and cellular metabolism.

(…continued) White blood cells metabolize and protect best at optimal body temperatures. For example, studies have shown that even a decrease of 1 degree from the optimal body temperature can lower immunity 20 fold! Unfortunately, once a hot particle of radioactive iodine, called an internal emitter, bonds into thyroid tissues, the free radical production escalates 24/7 for up to 80 days (80 days is the biological impact of a radioactive particle of 131-I, even though it has a mathematical half-life of only 8 days). Such damage will be permanent in many cases. Sixth, the scientific revision process can take too long to correct critiques with little to no merit, but it still must be done. Mr. Mangano and Dr. Janette Sherman, I believe, are writing a follow-up article to address many of the concerns so far raised by the scientific community. This should continue to be a great learning experience for all. In review, with the thyroid becoming damaged, usual and customary exposures to germs tend to go wild, and death rates escalate. This is the first phase. Coincidentally, it was just reported in USA Today that thyroid cancers have been “mysteriously” escalating for decades now (see: http://yourlife.usatoday.com/health/story/2012-01-15/Doctors-unsure-why-thyroid-cancer-cases-on-the-rise/52582694/1). I ask, what is so mysterious about it? The second phase is the future thyroid cancers, leukemias and other cancers that occur 5, 10 and even 20 years later, in silent fashion. But now, we can predict it will and must occur. For example, over 138 nuclear power plants operating normally were studied across the globe to determine what effects if any could be discovered regarding childhood leukemia rates. The results were startling. Dramatic increases in leukemia are found everywhere, in any nation. Also, once the nuclear power plant is decommissioned, these rates plummet. Cause and effect in the book of common sense. (..continued)

(…continued) To get a proper handle on its causation, I suggest reading (1) Hypothyroidism Type 2 by Mark Starr, MD, and then to review (2) the NIH report on the upwards number of 212,000 childhood thyroid cancers resulting from all the Nevada above ground nuclear testing from 1952 through 1963; then (3) lastly have your readers peruse the epic work published through the New York Academy of Sciences by Alexi Yablokov, et al. This lengthy article meticulously documents the devastating health consequences from the impaired thyroid glands of hundreds of thousands living near and far away from the Chernobyl catastrophe, plus many other diseases which have resulted.

Last but most importantly, no critique of the M&S article can be complete without a thorough understanding of (A) the Petkau Effect, (B) the associated “by-stander effect” and (C) the inability of the human body to trigger critical repair effort when the radiation exposure is below a certain threshold of radiation. This means tiny, tiny, tiny amounts of radiation are much, much, much more deadly than significantly higher levels of radiation. Sounds counter-intuitive, right? Well, that’s because most scientists have not studied these three factors closely enough. Recall that the National Academy of Sciences has stated repeatedly in their Biological Effects of Ionizing Radiation (BEIR) VII reports (Parts 1 & 2), that there is NO safe level of exposure to radiation. And lastly, to compare a millisecond exposure of radiation from a dental X-ray or medical X-ray to the 24/7 ionizing effects of an internal emitter is the epitome of ignorance. They are apples and oranges. There is NO safe level of radiation exposure, period!

Sincerely, Dr. John Apsley (www.doctorapsley.com) John W. Apsley, II, MD(E), ND, DC

It was never my intent to trivialize the issue of manmade radioactive elements released into the environment. My concern was with one particular study, the Mangano/Sherman study, and only because it was getting significant traction within the alternative health community for what I believe are invalid reasons. One thing puzzles me about your response to my newsletter, though. All of the points that you address are, in fact, the rather secondary issues that relate to why I did not immediately respond to the study when it was first released, not the primary issue relating to the validity of the study itself. At the moment, that still sits there like the proverbial elephant in the room. I am referring, of course, to the fact that the data concerning the number of deaths in the US as presented in the study do not match the data as presented in the cited CDC source. And what makes it especially disturbing is that the data match selectively. The numbers match perfectly for the weeks after the containment breach, confirming that we are truly accessing the same data source. Where they don’t match is in the weeks before the breach. Those numbers are consistently under those listed in the CDC source, and it is that understatement that creates the basis for the entire study. I provided links for people to check the data source for themselves to verify this mismatch. I’m curious as to whether or not you took a look to verify it for yourself. Without the mismatch:

There is no notable jump in deaths.

The study’s primary conclusions are rendered invalid.

And the conclusions then amount to little more than fear mongering – and I really dislike fear mongering. Unfortunately, this taints all other conclusions in the study by association, no matter how true they may ultimately be.

If there is an explanation for why those numbers do not match, I would really love to hear it, although I still have a problem with studies that try to extrapolate conclusions based on ALL deaths. As I indicated in the newsletter, in this case it makes radiation responsible for deaths from lightning strikes, automobile accidents, whatever. It makes any conclusions based on such statistics tenuous at best.

That said, I absolutely agree with your conclusion that any exposure to radioactive elements released into the environment is unhealthy. Fortunately, there are things we can do to protect ourselves such as:

Keeping iodine levels up to protect the thyroid

Heavy metal detoxing

Apple pectin and montmorillonite clay

Spirulina and chlorella

And the use of antioxidants or blood cleansers that contain chaparral, which is proven to protect against radioactivity-induced genetic damage.

Check out: Fear is the Mind Killer

From KHOU News, Houston, TX:

Government caught covering up cancer causing radioactive drinking water

http://www.youtube.com/watch?feature=player_embedded&v=8OAUnPdVtLw

Jon, in your response you gave three important bullet points that were conclusions:

• There is no notable jump in deaths.

• The study’s primary conclusions are rendered invalid.

• And the conclusions then amount to little more than fear mongering – and I really dislike fear mongering. Unfortunately, this taints all other conclusions in the study by association, no matter how true they may ultimately be.

So, we can agree that if the CDC does clearly show a significant jump in deaths, that the study’s primary conclusions are valid, and that this would not be about fear mongering, rather a discussion that educates as to the real dangers, then the means to overcome these dangers, yes?

The discrepancy is not what you believe it is; it is a matter of how the CDC compiles reported data as it comes in. Each week, a certain number of institutions report their tallies to the CDC, while others may take a few more weeks to report their tallies for a given week. Accordingly, the CDC updates its records. If a researcher accesses the CDC reports of certain deaths early on, they can get them for, say, the first 14 weeks after the Fukushima catastrophe. But after they write their report, the CDC will continue to update those weeks' records again and again. Why? Because other hospitals or clinics may take months to report for a given week, let alone for several weeks. This is why it takes up to 3 years to finalize all the incoming mortality reports for each precise week. Mangano and Sherman are quite clear on this issue: the “trend” is there, and it will take until 2014 to tally all data reports fully, week by week, until there is consensus. In the meantime the trend is clear, exactly as it was found to be after the Chernobyl incident. The CDC showed a trend very early on, and that trend was verified after the finalization of the data 3 years later. I will send you an email with my final paragraph.

Hi Dr. Apsley:

I appreciate what you're saying, but I still don't buy it. First, if what you say is accurate, then basing conclusions on data which is still in flux is a highly questionable methodology. Or to put it another way: if a government scientist had done the same sort of thing to support a point you disagreed with, you'd be screaming bloody murder. We all would.

But that aside, I still have a problem drawing conclusions about deaths based on fluctuations in total death rates. There can be a number of possible explanations for an increase in deaths — assuming that turns out to be valid — and many of these explanations are at least as viable an explanation as fallout, if not more so. For example, before the Great Depression, suicide rates ran about 18 per 100,000. But at the height of the Depression, they reached 22 per 100,000. That's a difference of 4 per 100,000. Well, we are not in a great depression now, but we are certainly in deeply troubled economic times, and in 2011, many people began to finally lose heart as evidenced by the 3.5 million people who simply stopped looking for work last year and dropped off the unemployment rolls. If we are seeing the same 4 person increase in suicides per 100,000 citizens, that works out to an additional 12,000 deaths per year based on a US population of 300 million.

That pretty much covers the increase that Mangano talks about in his study. I'm not saying that's what happened. But based on the available evidence, it's at least as likely an explanation for the increase in deaths as radioactive fallout.
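The arithmetic behind that 12,000 figure can be checked with a few lines of Python. This is only a rough sketch using the round numbers quoted above (a rise of 4 per 100,000 and a US population of 300 million); the function names are made up for illustration, and the second helper simply scales an annual count to a shorter window, such as the 14 weeks the study covered.

```python
def extra_deaths_per_year(rate_increase_per_100k, population):
    """Additional deaths per year implied by a rise in an annual rate per 100,000."""
    return rate_increase_per_100k * population / 100_000


def prorate(annual_count, weeks, weeks_per_year=52):
    """Scale an annual count to a shorter window, assuming deaths are spread evenly."""
    return annual_count * weeks / weeks_per_year


# Round figures quoted in the comment above (illustrative, not measured data).
annual = extra_deaths_per_year(4, 300_000_000)
print(annual)  # 12000.0

# The same annual figure scaled to a 14-week window.
print(round(prorate(annual, 14)))
```

Keeping the annual figure and the 14-week figure separate makes it easier to compare like with like when setting this estimate against a count taken over a fixed number of weeks.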

Again, I'm not saying that radioactive fallout is not a major health problem, or even that it hasn't caused deaths — just that the study referenced above doesn't prove it, not even close.

Jon

The M&S report does not “parse” the emerging CDC data incorrectly, Jon. At the time Mangano and Sherman evaluated the emerging CDC data, it accurately applied to ~25% of the U.S. population, a huge sample base. They knew going in that this same CDC data covering the first 14 weeks after the Fukushima event would be regularly updated as time went on. They were looking for the early trends, if any, and found them. So, this initial article will be the first in a series of articles to be written by M&S.

Now, the CDC data that you gathered and tabulated into chart form will need revision, because the current CDC data has already rendered yours outdated for those same exact weeks in question. Under such a circumstance, your data table is just as guilty as you suggested the M&S data tables were. Mangano and Sherman fully expected this, and note it in their article. Put another way, Jon: you accurately listed the CDC data as it stood in the time frame it was taken from, so you did not “massage” any data. For the same reason, neither did Mangano or Sherman massage any data. The CDC data will keep updating itself. Any trained epidemiologist is aware of this. So, your criticism of the M&S article is simply inaccurate in this regard, Jon. This was the issue I was trying to gently point out to you. Over time, the CDC data will reflect increasing numbers of those who died across the U.S., and there will fall out (no pun intended) clearer and clearer trends as to what it is reasonable to ascribe these unexpected increases in deaths to.

This is the revision process.

Best, Dr. John W. Apsley, II

Based on what you say, M&S took a snapshot of the data at a particular point in time and were able to identify a trend. I took a snapshot of the data at a later point in time, when it was "more" updated, and the trend was no longer visible. Curiously, M&S happened to pick a point in time that split the updating of the data "precisely" between pre- and post-Fukushima (and I mean "precisely"), so as to provide the most advantageous numbers for their argument. Very fortuitous for them.